AR + SOUND

XR in all its forms is becoming part of our daily lives. We overlay navigation, catch Pokémon and try on new sunglasses without thinking much about the underlying technology.

A couple of years ago one of the large Silicon Valley tech companies asked us at IDEO to explore what an AR technology anchored in sound, rather than the traditional visual-first approach, would be like. What would the UX be? What would the core experience be? What form factors would be desirable?

How might we design a desirable wearable device that augments the physical world with the social web?

Below I'm sharing a couple of snippets of that exploration.

2017

MY ROLE

Prototyping

UX design

What does an audio-first AR experience sound like?

Augmented hearing isn't new

Over many centuries we have explored different ways to augment our ears, from simple cones that focus sound to more refined hearing aids and today's cochlear implants. So trying to give our ears superpowers isn't as new as it might be for our eyes or other senses.

That said, one big difference today is that we can decouple the creation of sound from time and place. Our phones already do this, but with always-on, always-listening devices dedicated to augmenting our lives with a hidden layer of sound, we can create new experiences that are more intimate and designed first and foremost for our ears.

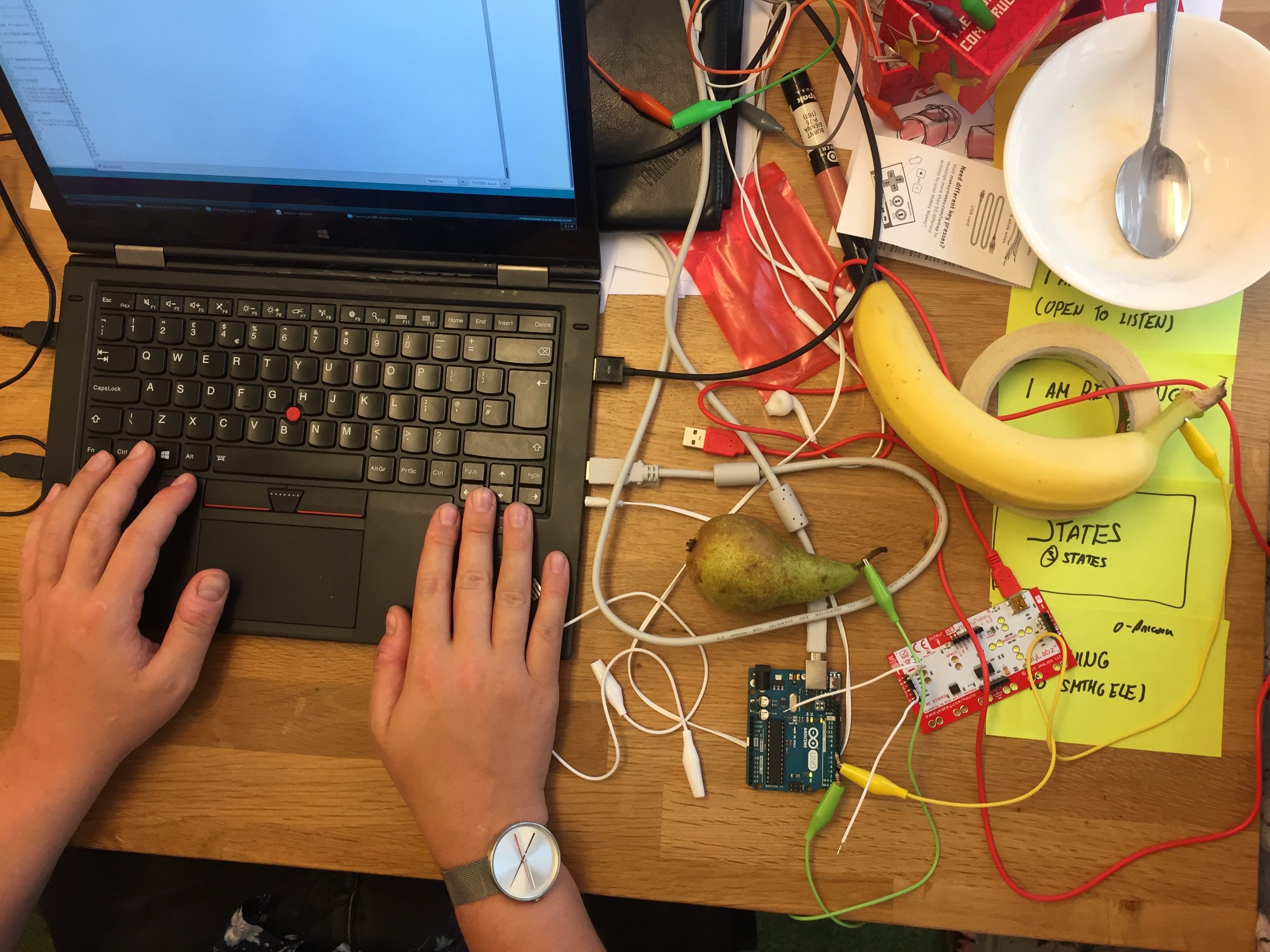

Exploring through making

Even though sound is an integral part of our lives, we quickly found that most people lack the vocabulary to talk about it and have a hard time imagining different experiences. To overcome that hurdle we took a prototyping approach, building a large set of small experiments to learn what a sound-first AR experience would feel like.

Research to evolve

We brought the most promising of our prototypes to a set of individuals who each had some form of "extreme" relationship with sound. We had discussions with people who were visually impaired, music producers and "golden ears". Through those discussions we learnt what worked, what was creepy and what would be an exciting way to augment their daily lives.

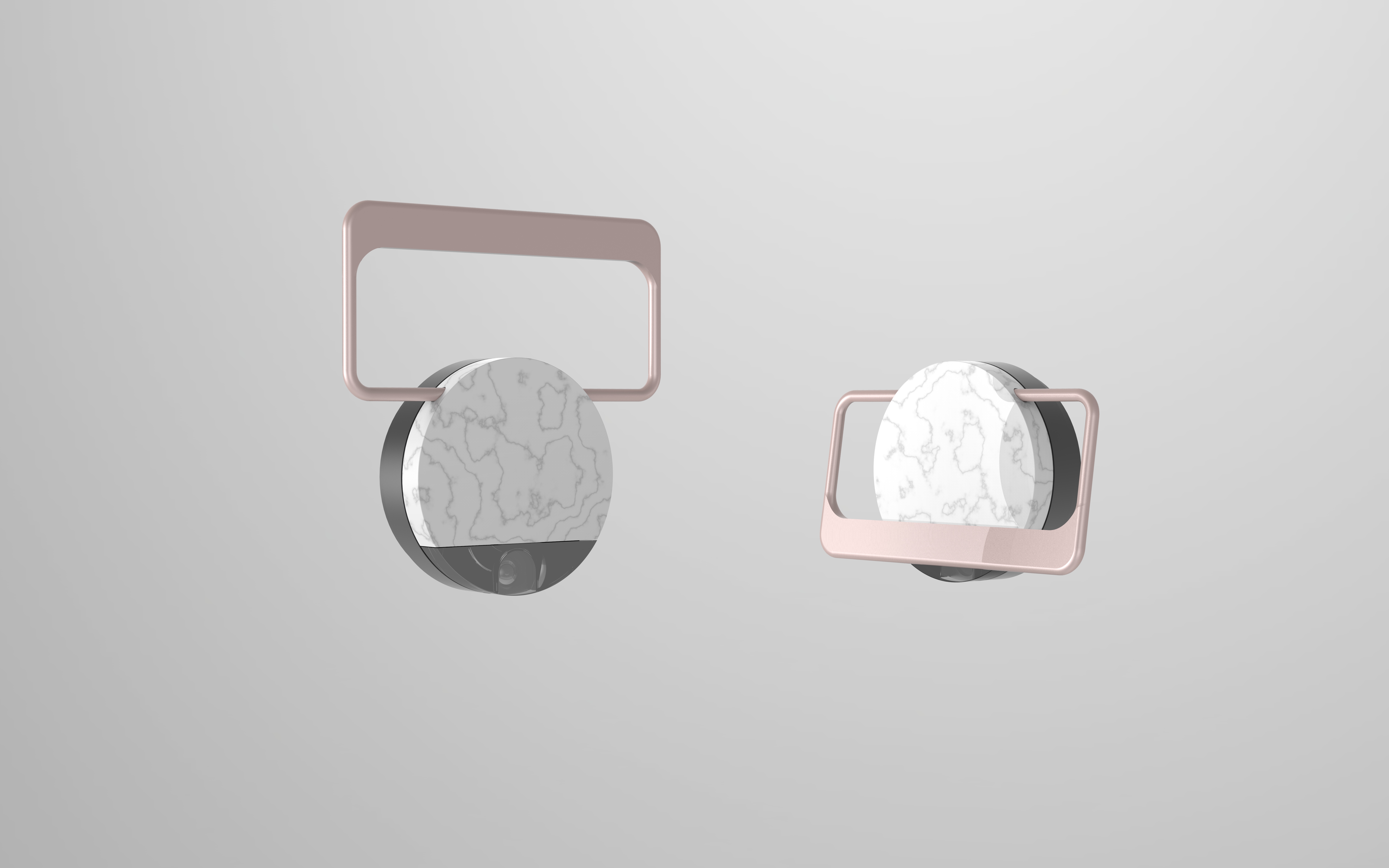

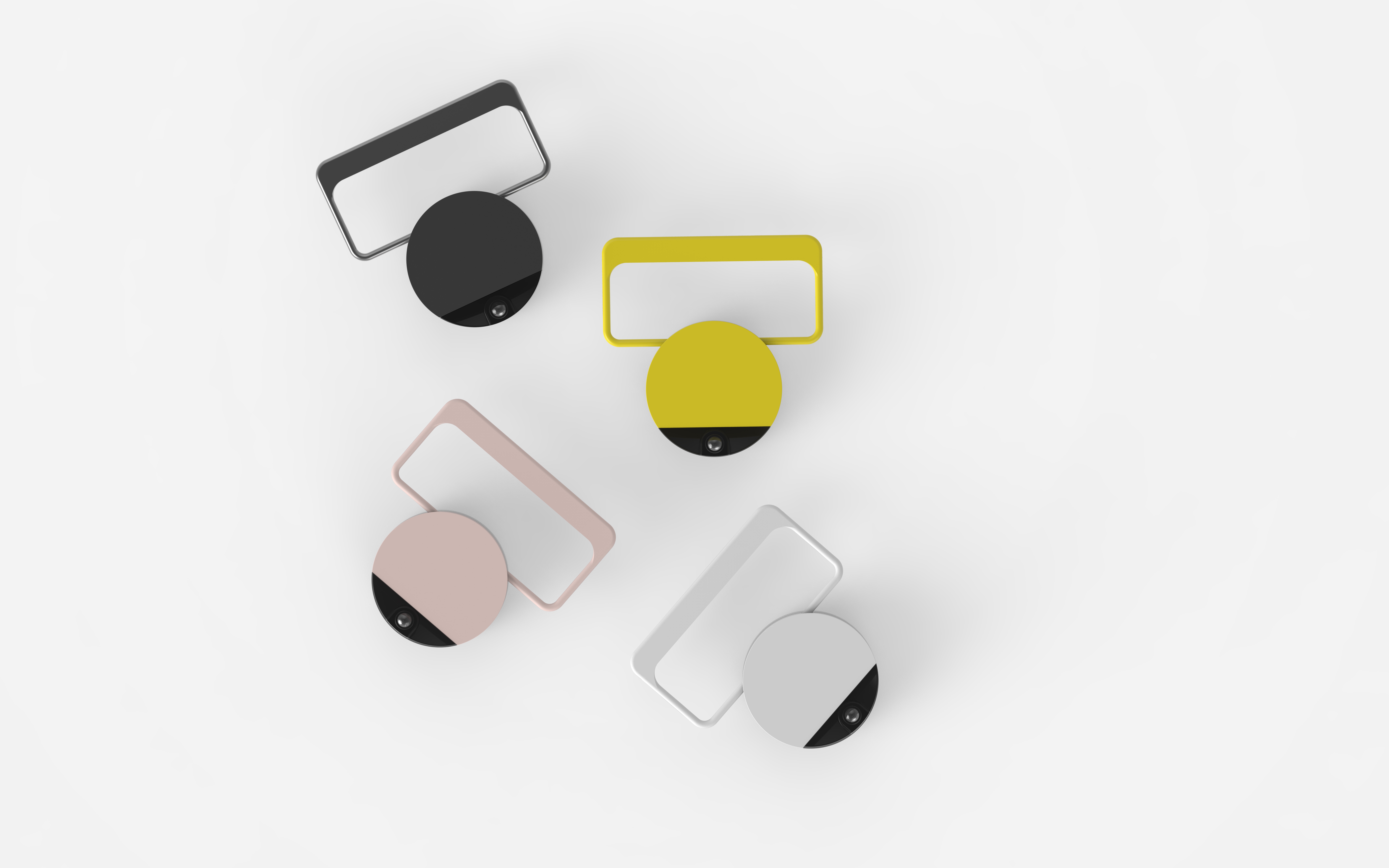

We also shared early form factors of what a device could look like to better understand what is socially acceptable to wear on the body, what signals a device communicates and how important the overall form is.

Exploring the form

Throughout the research, one piece of feedback was very clear: the status of the audio experience was ambiguous to an outside observer. The form of the device needed to articulate this status clearly so that people could tell whether someone was "connected" to this hidden audio-first world or open to connecting with the people around them.

For this exploration, we paired with an industrial design firm called Instrument industries, which worked alongside the IDEO team to explore potential form factors based on the research findings and the hardware requirements for delivering the experiences. Together we landed on a design language inspired by jewellery that combined the ability to communicate status with the desire to be worn.

Honing the experience

As we learned more, we started to home in on a set of form factors and UX directions that were desirable for our users and had been explored enough in terms of feasibility to be worth taking seriously. We landed on two main UX directions, which we called Narnia and Maga.

Narnia was all about transporting you to, and connecting you with, another location, while Maga was about exploring a hidden world of sound around you. For confidentiality reasons I can't share more details on the process we took to bring these concepts forward.

Where we landed

The final designs and recommendations were used as input to a larger initiative to better position the company for an AR-driven future. The hypotheses and insights are being used across their teams to guide decision-making and to inform the next generation of AR experiences.